I know this isn’t about that but whenever I read a headline about Intel I’m reminded to be thankful for having these fucks as the only thing that could challenge the GPU duopoly. very encouraging.

Legacy software and games mean that ARM PCs will never be anything more than a niche curiosity.

The ISA wars are long over, and x86 won time and time again.

I disagree. Legacy software and games can run through translation layers. We already do that with Windows software on Linux.

Maintained software doesn’t really have an excuse not to support ARM, unless the developers are woefully incompetent/lazy/personally biased against supporting it.

We already do that with Windows software on Linux.

Translating syscalls and translating opcodes (especially efficiently) are different things.

And we don’t.

But yes, this is possible and Windows for ARM includes such a translation layer. Except it’s not very good yet.

In some sense ARM everywhere is a nightmare. There’s no standard like EFI or OpenFirmware for ARM PCs.

I hope that changes.

Still no Discord ARM app…

The app for macOS? On Apple silicon it doesn’t use Rosetta any longer, I believe.

Definitely not on Windows for ARM. Idk about Mac

Discord has gotten way too big for its own good and only focuses on getting people to subscribe to nitro.

There is no excuse for them, just plain greed and laziness.

The thing is, for the Windows ecosystem, ARM doesn’t have a good “hook”.

When tablets scared the crap out of Intel and Microsoft back in the Windows 7 days, we saw two things happen.

You had Intel try to get some Android market share, and fail miserably, because the Android architecture was built around ARM and anything else was doomed to be crappier for those applications.

You had Microsoft push for Windows on ARM, and it failed miserably, because the Windows architecture was built around x86 and everything else is crappier for those applications.

Both x86 and Windows live specifically because together they target a market that is desperate to maintain application compatibility for as much software as possible, without big discontinuities in compatibility over time. A transition to ARM scares that target market enough to make it a non-starter unless Microsoft was going to force it, and they aren’t going to.

Software vendors have plenty of reason not to bother with Windows on ARM support because virtually no one has those devices. That would mean extra work without apparent demand.

ARM is perfectly capable, but the Windows market is too janky to be swayed by technical capabilities.

My M1 and M3 beg to differ.

Your Apple crap aren’t PCs according to its own marketing.

Not to mention my 9950X could curbstomp both.

tech tribalism at its best

My company bought 5 Snapdragon laptops to test and ended up returning all of them. They’re not bad per se; the operating system that they’re expected to run is. Windows for ARM has a looong way to go before it is production ready. Its biggest hurdle is the translation layer (similar to Rosetta 2, which works near flawlessly), which is so bad that if your program doesn’t have a native ARM build, you’re better off not even bothering. I’ve seen an article indicating that they improved it a lot in the current Windows Insider build, but we’ve already returned the laptops and switched over to AMD. In my opinion, if Microsoft truly cares about Windows on ARM then it will be ready in a year or so. If they don’t… probably 2-3.

As for Linux, it works great, but that’s because most of the packages are FOSS and so compiling them for ARM doesn’t take a lot of effort. Sadly, Security at our company insists we run Windows so that ~~spyware~~ antivirus software can be installed on all end user machines.

Fun fact: antivirus, or spyware as you call it, can also be installed on Linux.

It’s probably also easier and can likely be done more invasively considering that the company can control every step like the kernel and even app distribution.

While true, not all vendors support Linux, which is the case for myself.

Not to mention that we Linux users are kind of against sandboxing apps, which keeps us somewhat behind on desktop stuff.

Don’t ask for permission if most of what you do can be run from web apps. Its worked for me for a couple of years, I just can’t call IT :)

It’s* worked for me

Yeah if you did this in my company… install linux on a machine that we installed windows on. I will get you fired and hand over everything I can possibly get to HR for them to do whatever else. You don’t fuck with my infrastructure. Use your big boy adult skills and request/requisition a linux machine so that it can be done properly.

Company computers are not yours. They don’t belong to you. The people who are ultimately responsible for the company security posture don’t work for you. Sabotaging policy that’s put in place is the fastest way to get blacklisted in my industry especially since we must maintain our compliance with a number of different bodies otherwise the company is completely sunk.

I didn’t down vote you but honestly interested in an adult conversation regarding your stance. If all I use is MS365 and I can use it in a web app with full 2FA how am I a security risk? I can access all the same things on my personal laptop, nothing is blocked, so how is Linux different?

The adult conversation would begin with you don’t get to change things about stuff that you don’t own without permission from the owner, it’s not yours. It belongs to the company. Materially changing it in any way is a problem when you do not have permission to do so.

Most of this answer would fully depend on what operations the company actually conducts. In my case, our platform has something on the order of millions of records of background checks, growing substantially every day. SSNs, Court records, credit reports… very long list of very very identifiable information.

Even just reinstalling windows with default settings is an issue in our environment because of the stupid AI screen capture thing windows does now on consumer versions.

I’m a huge proponent of Linux. Just talk to the IT people in your org… many of them will get you a way to get off the windows boat. But it has to still be done in a way that meets all the security audits/policies/whatever that the company must adhere to. Once again, I deal a lot with compliance. If someone is found to be out of compliance willingly, we MUST take it seriously. Even ignoring the obvious risk of data leakage, just to maintain compliance with insurance liability we have to take documented measures everywhere.

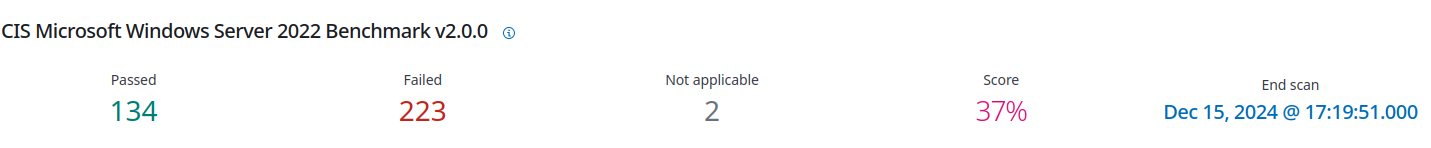

Many default Linux installs don’t meet policy minimums. Here’s an example Debian box in a testing environment with default configurations from the installer, benched against this standard: https://www.cisecurity.org/benchmark/debian_linux.

Endpoint security would be missing for your laptop if you jumped off our infrastructure. Tracking of assets would be completely gone (eg, stolen assets. Throwing away the cost of the hardware and risking whatever data that happens to be on the device to malicious/public use). File integrity monitoring. XDR services.

Did I say that the device isn’t yours? If not, I’d like to reiterate that. It’s not yours. Obtaining root, or admin permissions on our device means we can no longer attest that the device is monitored for the entire audit period. That creates serious problems.

Edit: And who cares about downvotes? But I know it wasn’t you. It was a different lemmy.world user. Up/Downvotes are not private information.

Edit2: Typos and other fixes that bothered me.

Edit3: For shits and giggles, I spun up a CLI only windows 2022 server (we use these regularly, and yes you can have windows without the normal GUI) and wanted to see what it looks like without our hardening controls on it… The answer still ends up being that all installs need configuration to make them more secure than their defaults if your company is doing anything serious.

Exactly. And this is why I refuse to work at companies like yours.

It’s nothing personal, but I don’t want to work somewhere where I have to clear everything with an IT department. I’m a software engineer, and my department head told IT we needed Macs not because we actually do, but because they don’t support Macs so we’d be able to use the stock OS. I understand that company equipment belongs to the company, not to me, but I also understand that I’m hired to do a job, and dealing with IT gets in the way of that.

I totally appreciate the value that a standard image provides. I worked in IT for a couple years while in school and we used a standard image with security and whatnot configured (even helped configure 802.1x auth later in my career), so I get it. But that’s my line in the sand, either the company trusts me to follow best practices, or I look elsewhere. I wouldn’t blatantly violate company policy by installing my own OS, I would just look for opportunities that didn’t have those policies.

Exactly. And this is why I refuse to work at companies like yours.

Then good luck to you?

But you seem to have missed the point. The images I shared are from an SCA (Security Configuration Assessment). They’re a “minimum configuration” standard, not a standard image. Though that SCA does live as standard images in our virtualized environments for certain OSes. I’m sure if we had more physical devices out in company-land we’d need to standardize more on images that get pushed out to them… But we don’t have enough assets out of our hands to warrant that kind of streamlining.

I’m a huge proponent of Linux. Just talk to the IT people in your org… many of them will get you a way to get off the windows boat. But it has to still be done in a way that meets all the security audits/policies/whatever that the company must adhere to.

I literally go out of my way to get answers for folks who want off the windows boat. Go have a big boy adult conversation with your IT team. I’m linux only at home (to the point where my kids have NEVER used windows [especially these days with schools being chromium only]. And yes, they use arch [insert meme]), and I’ve converted a bunch of our infra to linux that was historically windows for this company. If anyone wanted linux, I’d get them something that they’re happy with that met our policies. You are outright limiting yourself to workplaces that don’t do any work in any auditable/certified field. And that seems very very short-sighted, and a quick way to limit your income in many cases.

But you do you. My company’s dev team is perfectly happy. I would know, since I also do some dev work when time allows and work with them directly, regularly. Hell, most of them don’t even do work on their work-issued machines at all (to the point that we’ve stopped issuing a lot of them at their request), as we have web-based VDI stuff where everything happens directly on our servers. It’s much easier to compile something on a machine that has scalable processors basically at a whim (nothing like 128 server cores to blast through a compile), and all of those images meet our specs as far as policy goes. But if you’re looking to be that uppity, annoying user, then I am also glad that you don’t work in my company. Someone like you is how we would lose our certification(s) during the next audit period or, worse, lose consumer data. You know what happens when those things happen? The company dies and you and I both don’t have jobs anymore. Though I suspect that you, as the user who didn’t want to work with IT, would have a harder time getting hired again (especially in my industry) than I would for fighting to keep the company’s assets secure… but that one damn user (and their managers) just went rogue and refused to follow policies and restrictions put in place…

I’m a software engineer, and my department head told IT we needed Macs not because we actually do, but because they don’t support Macs so we’d be able to use the stock OS.

No you don’t. There is no tool that is Mac-only that you would need where there is no alternative. This need is a preference or more commonly referenced as a “want”… not a need. Especially modern M* macs. If you walked up to me and told me you need something… and can’t actually quantify why or how that need supersedes current policy I would also tell you no. An exception to policy needs to outweigh the cost of risk by a significant margin. A good IT team will give you answers that meet your needs and the company’s needs, but company’s needs come first.

either the company trusts me to follow best practices, or I look elsewhere

So if I gave you a link to a remote VM, and you set it up the way you want, and then I came in after the fact and checked it against our SCA… you’d get even close to a reasonable score? The fact that you’re so resistant to working with IT from the get-go proves to me that you would fail to get anywhere close to following “best practices”. No single person can keep track of and secure systems these days. It’s just not fucking possible with the 0-days that pop out of the blue seemingly every other fucking hour. The company pays me to secure their stuff. Not you. You wasting your time doing that task inefficiently and incorrectly is a waste of company resources as well. “Best practice” would be the security folks handling the security of the company, no?
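To make “check it against our SCA” a bit more concrete, here is a minimal sketch of what that kind of scoring could look like. Everything in it is an assumption for illustration: the `Check` type, the rule names, the config keys, and the thresholds are made up and are not taken from the CIS benchmark or from any real assessment tool.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Config = Dict[str, object]


@dataclass(frozen=True)
class Check:
    """One hypothetical minimum-configuration rule."""
    name: str
    passes: Callable[[Config], bool]


# Illustrative rules only; a real benchmark has hundreds of these.
CHECKS: List[Check] = [
    Check("root SSH login disabled",
          lambda c: c.get("ssh_permit_root_login") == "no"),
    Check("firewall enabled",
          lambda c: c.get("firewall_enabled") is True),
    Check("password min length >= 14",
          lambda c: int(c.get("password_min_length", 0)) >= 14),
    Check("automatic updates enabled",
          lambda c: c.get("auto_updates") is True),
]


def assess(config: Config, checks: List[Check] = CHECKS) -> Tuple[int, List[str]]:
    """Return (score as a percentage of checks passed, names of failed checks)."""
    failed = [ch.name for ch in checks if not ch.passes(config)]
    passed = len(checks) - len(failed)
    score = round(100 * passed / len(checks)) if checks else 0
    return score, failed


if __name__ == "__main__":
    # A made-up "installer defaults" box that fails most of the rules above.
    defaults = {
        "ssh_permit_root_login": "yes",
        "firewall_enabled": False,
        "password_min_length": 8,
        "auto_updates": True,
    }
    score, failed = assess(defaults)
    print(f"score: {score}%")
    for name in failed:
        print(f"FAILED: {name}")
```

The point of the sketch is only that the assessment is a list of pass/fail rules against a machine’s configuration, so a fresh install can score badly without anyone having done anything wrong.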

I’m linux only at home (to the point where my kids have NEVER used windows

Same.

I honestly don’t think this issue has anything to do with our staff, but rather with our corporate policies. Users can’t even install an alternative browser, which is why our devs only support Chrome (our users are all corporate customers).

My issue has less to do with Windows (unacceptable for other reasons), but with lack of admin access. Our IT team eventually decided to have us install some monitoring software, which we all did while preserving root access on our devices.

I would honestly prefer our corporate laptops (ThinkPads) over Apple laptops, but we’re not allowed to install Linux on them and have root access because corporate wants control (my words, not theirs).

web-based VDI stuff where everything happens directly on our servers

I don’t know your setup, but I probably wouldn’t like that, because it feels like solving the wrong problem. If compile times are a significant issue, you probably need to optimize your architecture because your app is probably a monolithic monster.

I like cloud build servers for deployment, but I hate debugging build and runtime issues remotely. There’s always something that remote system is missing that I need, and I don’t want to wait a day or two for it to get through the ticket system.

lose consumer data

Customer data shouldn’t be on dev machines. Devs shouldn’t even have access to customer data. You could compromise every dev machine in our office and you wouldn’t get any customer data.

The only people with that access are our DevOps team, and they have checks in place to prevent issues. If I want something from prod to debug an issue, I ask DevOps, who get the request cleared by someone else before complying.

I totally get the reason for security procedure, and I have no issue with that. My issue is that I need to control my operating system. Maybe I need to Wireshark some packets, or create a bridge network connection, or do something else no sane IT professional would expect the average user to need to do, and I really don’t want to deal with submitting a ticket and waiting a couple days every time I need to do something.

There is no tool that is Mac-only that you would need where there is no alternative

Exactly, but that’s what we had to tell IT so we wouldn’t have to use the standard image, which is super locked down and a giant pain when doing anything outside the Microsoft ecosystem. I honestly hate macOS, but if I squint a bit, I can almost make it feel like my home Linux system. I would’ve fought with IT a bit more, but that’s not what my boss ended up doing.

We run our backend on Linux, and our customers exclusively use Windows, so there’s zero reason for us to use macOS (well, except our iOS builds, but we have an outside team that does most of that). Linux would make a ton more sense (with Windows in a VM), but the company doesn’t allow installing “unofficial” operating systems, and I guess my boss didn’t want to deal with the limited selection of Linux laptops. I’m even willing to buy my own machine if that would be allowed (it’s not, and I respect that).

If our IT was more flexible, we’d probably be running Windows (and I wouldn’t be working there), but we went with macOS. Maybe we could’ve gotten Linux if we had a rockstar heading the dept, but our IT infra is heavy on Windows, so we’re pretty much the only group doing something different (corporate loves our product though, and we’re obsoleting other in-house tools).

The fact that you’re so resistant to working with IT from the get-go proves to me that you would fail to get anywhere close to following “best practices”.

No, I’ve just had really bad experiences with IT groups, to the point where I just nope out if something seems like a potential nightmare. If infra is largely Microsoft, the standard issue hardware runs Windows, and the software group that I’m interviewing with doesn’t have special exceptions, I have to assume it’s the bog standard “IT group calls the shots” environment, and I’ll nope right on out. For me, it’s less about the pay and more about being able to actually do my job, and I’ll take a pay cut to not have to deal with a crappy IT dept.

I’m sure there are good IT depts out there (and maybe that’s yours), but it’s nearly impossible to tell the good from the bad when interviewing a company. So I avoid anything that smells off.

It’s just not fucking possible with the 0-days that pop out of the blue seemingly every other fucking hour.

Yet, I’ve pointed out several security issues in our infra managed by a professional IT team, from zero days that could impact us to woefully outdated infra. I’m not perfect and I don’t believe anyone is, but just being in the IT position doesn’t mean you’re automatically better at keeping up with security patches.

I’m usually the first to update on our team (I’m a lead, so I want to catch incompatibilities before they halt development), and I work closely with our internal IT team to stay updated. In fact, just Friday I asked about some potential concerns, and it turns out we were running into resource limits on devices hosted on Linux OSes that were already out of the security update window. So that’s two issues caught by curiosity about something I saw in the code as it relates to infra I can’t (and shouldn’t) access. I don’t blame our team (they’re always understaffed IMO), but my point here is that security should be everyone’s concern, not just that of a team who locks down your device so you can’t screw things up.

If everything is exactly the same, everything will be compromised at the same time, so some variation (within certain controls) is a good thing IMO. Yet top down standardization makes that implausible.

The company pays me to secure their stuff. Not you.

The company also pays me to write secure, reliable software, and I can’t do that effectively if I can’t install the tools I need.

Yes, IT professionals have their place, and IMO that’s on the infra side, not the end-user machine side. So set up the WiFi to block direct access between machines, segment the network using VLANs to keep resources limited to teams that need them, put a boundary between prod (Ops) and devs to contain problems, etc. But don’t take away my root access. I’m happy to enable a system report to be sent to IT so they can check package versions and open ports and whatnot, but let me configure my own machine.
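As a rough sketch of what reviewing that kind of system report could look like on the IT side: the function below takes a machine’s reported package versions and open ports and flags anything below a minimum version or outside an allowed port list. The function names, the report shape, and the example values are all hypothetical, and it assumes plain dotted numeric versions (real distro version strings are messier).

```python
from typing import Dict, Iterable, List, Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Parse a purely numeric dotted version such as '3.0.13' (an assumption)."""
    return tuple(int(part) for part in version.split("."))


def review_report(
    installed: Dict[str, str],
    open_ports: Iterable[int],
    minimum_versions: Dict[str, str],
    allowed_ports: Iterable[int],
) -> List[str]:
    """Return human-readable findings for a self-reported machine state."""
    findings: List[str] = []
    # Flag installed packages that are older than the policy minimum.
    for package, minimum in sorted(minimum_versions.items()):
        have = installed.get(package)
        if have is not None and parse_version(have) < parse_version(minimum):
            findings.append(f"{package} {have} is older than required {minimum}")
    # Flag listening ports that are not on the allow list.
    for port in sorted(set(open_ports) - set(allowed_ports)):
        findings.append(f"unexpected open port {port}")
    return findings


if __name__ == "__main__":
    # Made-up report from a developer laptop.
    for finding in review_report(
        installed={"openssl": "3.0.2", "curl": "8.5.0"},
        open_ports=[22, 8080],
        minimum_versions={"openssl": "3.0.13", "curl": "8.0.0"},
        allowed_ports=[22],
    ):
        print(finding)
```

This only covers the reporting half of the argument; it says nothing about whether a self-reported state is enough to satisfy an audit.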

As somebody who has done this unofficially for 10 years… Don’t do it. For the entirety of those 10 years IT knew something was odd with my computer (they didn’t see, IT was India based) but they couldn’t be bothered to do anything about it.

In a proper company they will know and swiftly act.

Please don’t do this with a company machine.

At best this will get you fired, at worst sued.

Or imprisoned, depending on the industry and the risk you just caused.

This is such an awful idea.

Sounds like sour grapes, which is pretty much the only thing of note coming out of Intel lately

This sounds pretty plausible. The Windows user is the least likely to understand the implications of ARM for their applications, in the ecosystem that is the least likely to accommodate any change. Microsoft likes to hedge its bets but generally does not have a reason to prefer ARM over x86; its revenue opportunity is the same either way. Application vendors are not particularly motivated yet because there’s low market share and no reason to expect Windows on x86 to go anywhere.

Just like last time around, Windows and x86 are inextricably tied together. Windows is built on decades of backwards compatibility in a closed source world and ARM is anathema to x86 Windows application compatibility.

Apple forced processor architecture changes because they wanted them, but Microsoft doesn’t have the motive.

This has next to nothing to do with the technical qualities of the processor, but it’s just such a crappy ecosystem to try to break into on its own terms.

Ironically, their new GPUs are supposedly actually pretty decent. It’s like Bizarro-world over there, LOL!

Their CPUs would also be decent if they only made low end parts

Burn unit to Intel!

Decent only if you look at raw performance for the price compared to other MSRPs.

When you scratch beneath the surface a little and see what they’re having to do to keep up with the 3 year old low end Nvidia and AMD parts (that are due to be replaced very soon), it paints a less rosy picture. They’re on a newer, more expensive node, use a fair bit more power, and have a larger die size by quite a bit than their AMD/Nvidia counterparts.

Add to that Intel doesn’t get the discounts from TSMC that Nvidia and AMD get, and I’m doubtful Battlemage is profitable for Intel (this potentially explains why availability has been so poor - they don’t want to sell too many).

While it’s true the average buyer won’t care about the bulk of that, it does mean Intel is limited in what they can do when Nvidia and AMD release their next generation of stuff within the next few months.

If I can get one I’m buying one. I think their performance/cost ratio is excellent, and will probably make NVidia and AMD bring down their mid-range card prices.

But I’m not forgetting who made the prices come down. I’m all in on supporting a new player in the GPU game, and the 5060 would have to make me grow new teeth or something to get me to give Nvidia money over Intel at this point.

You kinda missed the most important detail: they’re competing with the mid-range (and yes, a 4060 is the midrange) for substantially less money than the competition wants.

I know game nerd types don’t care about that, but if you’re trying to build a $500 gaming system, Intel just dropped the most compelling gpu on the market and, yes, while there’s an upcoming generation, the 60-series cards don’t come out immediately, and when they do, I doubt they’re going to be competing on price.

Intel really does have a six month to a year window here to buy market share with a sufficiently performant, properly priced, and by all accounts good product.

I’m sorry that the facts surrounding Nvidia’s GPUs upset you.

I said that in my comment. And no, 4060 is not midrange lol

4090 48GB

4090

4080 Super

4080

4070 Ti Super

4070 Ti

4070 Super

4070

4060 Ti 16GB

4060 Ti

4060

It’s literally the lowest end GPU they make. The 60-class GPU stopped being midrange for Nvidia with Pascal, although due to Nvidia’s exceptional marketing capability, they’ve tricked people into thinking that’s not the case.

My main complaint isn’t with the performance, but the missed opportunity to release a higher SKU with more RAM. 12GB is enough for gaming with their performance, but adding more would open up other uses, like AI or other forms of compute. Maybe they still will, idk, but I would be totally willing to upgrade my AMD GPU if there was a compelling reason beyond a little better performance. Give me 16 or even 24GB VRAM for $300 or so and I’d buy, even if it’s not “ready” at launch (i.e. software support for AI/compute).

As of now, the GPU is well placed for budget rigs, but I think they could’ve cast their net a bit wider.

Yeah, you’re an enthusiast looking for enthusiast parts.

Try to understand that you’re not the only people in the market or discussion.

And that’s why I said it’s well placed for budget rigs. If I was building a computer today, I’d probably go with the B580.

However, I already have a computer with an RX 6650 XT, and while the B580 is an upgrade (10-15% higher FPS, esp at higher resolutions), it’s not enough to really convince me to upgrade. However, a higher RAM variant would because it adds capabilities that I can’t get with my current card.

Intel needs marketshare, and a high VRAM SKU would get a lot of people talking. They don’t even need to sell a lot of that SKU to make a big difference, it just needs to exist and have decent software support. They could follow it up with an enterprise lineup targeted at AI and GPGPU once the SW ecosystem is solid (which enthusiasts like me will help test).

Tell me about it

I bet he was just saying it to get noticed by Dad.